Entropy (thermodynamics)

Revision as of 12:07, 9 November 2009, by Paul Wormer

Entropy is a function of the state of a thermodynamic system. It is a size-extensive[1] quantity, invariably denoted by S, with dimension energy divided by temperature (SI unit: joule/K). Unlike volume, a similar size-extensive state parameter, entropy has no analogous mechanical meaning. Moreover, entropy cannot be measured directly: there is no such thing as an entropy meter, whereas state parameters like volume and temperature are easily determined. Consequently, entropy is one of the least understood concepts in physics.[2]

Excerpt from Clausius (1865).

Translation: Searching for a descriptive name for S, one could, just as it is said of the quantity U that it is the heat and work content of the body, say of the quantity S that it is the transformation content of the body. As I deem it better to derive the names of such quantities, which are so important for science, from the ancient languages, so that they can be used without modification in all modern languages, I propose to call the quantity S the entropy of the body, after the Greek word for transformation, ἡ τροπή. I have deliberately constructed the word entropy to resemble the word energy as much as possible, since the two quantities to be named by these words are so closely related in their physical meaning that a certain similarity in their names seems appropriate to me.

The state variable "entropy" was introduced by Rudolf Clausius in 1865,[3] when he gave a mathematical formulation of the second law of thermodynamics (see the inset for his text).

The traditional way of introducing entropy is by means of a Carnot engine, an abstract engine conceived in 1824 by Sadi Carnot[4] as an idealization of a steam engine. Carnot's work foreshadowed the second law of thermodynamics. This "engineering" way of introducing entropy, by means of an engine, will be discussed below. In this approach, the entropy change of a thermodynamic system that makes a transition from one state to another is the heat it absorbs (or releases) reversibly, each small amount of heat divided by the absolute temperature at which it is exchanged. The second law states that the entropy of an isolated system increases in spontaneous (natural) processes leading from one state to another, whereas the first law states that the internal energy of an isolated system is conserved.

In 1877 Ludwig Boltzmann[5] gave a definition of entropy in the context of the kinetic theory of gases, a branch of physics that developed into statistical thermodynamics. John von Neumann[6] extended Boltzmann's definition of entropy to a quantum statistical definition. The quantum statistical point of view, too, will be reviewed in the present article. In the statistical approach the entropy of an isolated (constant energy) system is kB ln Ω, where kB is Boltzmann's constant, Ω is the number of different wave functions ("microstates") of the system belonging to the system's energy, and ln denotes the natural (base e) logarithm. In other words, Ω is the degree of degeneracy of the energy level; all Ω microstates are equally probable, so the probability that the system is in any particular one of them is 1/Ω.

Not satisfied with the engineering type of argument, the mathematician Constantin Carathéodory in 1909 gave a new axiomatic formulation of entropy and the second law of thermodynamics.[7] His theory was based on Pfaffian differential equations. His axiom replaced the earlier Kelvin-Planck formulation of the second law and the equivalent Clausius formulation, and it did not need Carnot engines. Carathéodory's work was taken up by Max Born,[8] and it is treated in a few textbooks.[9] Because it requires more mathematics than the traditional approach based on Carnot engines, and because most students of thermodynamics do not need this mathematics, the traditional approach still dominates introductory works on thermodynamics.

Traditional definition

The state (a point in state space) of a thermodynamic system is characterized by a number of variables, such as pressure p, temperature T, amount of substance n, volume V, etc. Any thermodynamic parameter can be seen as a function of an arbitrary independent set of other thermodynamic variables, hence the terms "property", "parameter", "variable" and "function" are used interchangeably. The number of independent thermodynamic variables of a system is equal to the number of energy contacts of the system with its surroundings.

An example of a reversible (quasi-static) energy contact is offered by the prototype thermodynamic system, a gas-filled cylinder with a piston. Such a cylinder can perform work on its surroundings,

$$DW = p\,dV, \qquad dV > 0,$$

where dV stands for a small increment of the volume V of the cylinder, p is the pressure inside the cylinder, and DW stands for a small amount of work. Work by expansion is a form of energy contact between the cylinder and its surroundings. This process can be reversed: the volume of the cylinder is decreased, the gas is compressed, and the surroundings perform work on the cylinder, so that DW = p dV < 0.

The small amount of work is written DW, and not dW, because DW is not in general the differential of a function. However, when DW is divided by p, the quotient DW/p is simply the differential dV of the state function V. State functions depend only on the actual values of the thermodynamic parameters (they are local in state space), and not on the path along which the state was reached (the history of the state). Mathematically this means that integration from point 1 to point 2 along path I in state space gives the same result as integration along a different path II,

$$\int_1^2 \left(\frac{DW}{p}\right)_{\mathrm{path\ I}} = \int_1^2 \left(\frac{DW}{p}\right)_{\mathrm{path\ II}} = V_2 - V_1.$$

The amount of work (divided by p) performed reversibly along path I is equal to the amount of work (divided by p) along path II. Path independence of this kind, for any two paths between any two states, is the necessary and sufficient condition for DW/p to be the differential of a state function. So, although DW is not a differential, the quotient DW/p is one.

Reversible absorption of a small amount of heat DQ is another energy contact of a system with its surroundings; DQ, too, is not the differential of a function. In a manner completely analogous to DW/p, the following result can be shown for the heat DQ, divided by T, absorbed reversibly by the system along two different paths (along both paths the absorption is reversible):

$$\int_1^2 \left(\frac{DQ}{T}\right)_{\mathrm{path\ I}} = \int_1^2 \left(\frac{DQ}{T}\right)_{\mathrm{path\ II}}. \qquad (1)$$

Hence the quantity dS defined by

$$dS \equiv \frac{DQ}{T}$$

is the differential of a state variable S, the entropy of the system. In the next subsection equation (1) will be proved from the Kelvin-Planck principle. Observe that this definition of entropy fixes only entropy differences:

$$S_2 - S_1 = \int_1^2 dS = \int_1^2 \frac{DQ}{T}.$$
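Equation (1) can be made concrete by integrating along two explicit reversible paths. The following Python sketch treats a hypothetical example: one mole of a monatomic ideal gas (pV = nRT and U = (3/2)nRT are assumptions of the sketch, not part of the text above), taken from state 1 = (300 K, 10 L) to state 2 = (600 K, 30 L). Path I first heats the gas at constant volume and then expands it isothermally. Path II expands first and heats afterwards. The helper names `segment`, `join` and `integrate` are illustrative only. The sketch accumulates DW, DQ and DQ/T step by step.

```python
import math

R = 8.314462618   # molar gas constant, J/(mol K)
N_MOL = 1.0       # amount of gas in mol (illustrative choice)
CV = 1.5 * R      # molar heat capacity at constant volume, monatomic ideal gas (assumed)


def segment(T_a, V_a, T_b, V_b):
    """Straight line in the (T, V) plane, parametrized by s in [0, 1]."""
    return lambda s: (T_a + (T_b - T_a) * s, V_a + (V_b - V_a) * s)


def join(first, second):
    """Concatenate two segments into one path parametrized by t in [0, 1]."""
    return lambda t: first(2.0 * t) if t <= 0.5 else second(2.0 * t - 1.0)


def integrate(path, steps=100_000):
    """Return (W, Q, integral of DQ/T) along a reversible path t -> (T, V).

    DW = p dV with p = nRT/V; by the first law DQ = dU + DW with dU = n CV dT.
    Each small step is evaluated at its midpoint (midpoint rule).
    """
    W = Q = S = 0.0
    T_prev, V_prev = path(0.0)
    for i in range(1, steps + 1):
        T, V = path(i / steps)
        T_mid, V_mid = 0.5 * (T + T_prev), 0.5 * (V + V_prev)
        dW = N_MOL * R * T_mid / V_mid * (V - V_prev)
        dQ = N_MOL * CV * (T - T_prev) + dW
        W, Q, S = W + dW, Q + dQ, S + dQ / T_mid
        T_prev, V_prev = T, V
    return W, Q, S


T1, V1 = 300.0, 0.010  # state 1: temperature in K, volume in m^3
T2, V2 = 600.0, 0.030  # state 2
path_I = join(segment(T1, V1, T2, V1), segment(T2, V1, T2, V2))   # heat, then expand
path_II = join(segment(T1, V1, T1, V2), segment(T1, V2, T2, V2))  # expand, then heat

for name, path in (("I", path_I), ("II", path_II)):
    W, Q, S = integrate(path)
    print(f"path {name}: W = {W:7.1f} J   Q = {Q:7.1f} J   int DQ/T = {S:.6f} J/K")

exact = N_MOL * CV * math.log(T2 / T1) + N_MOL * R * math.log(V2 / V1)
print(f"closed form S2 - S1 = {exact:.6f} J/K")
```

Path I delivers twice the work of path II, because its isothermal expansion happens at 600 K rather than 300 K. The absorbed heat differs by the same amount. Both paths nevertheless give the same value of the integral of DQ/T, equal to n CV ln(T2/T1) + nR ln(V2/V1), about 17.78 J/K. The midpoint rule was chosen only for simplicity.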

Note further that entropy has the dimension of energy divided by temperature (joule per kelvin). Recalling the first law of thermodynamics (the differential dU of the internal energy satisfies dU = DQ − DW), it follows that

$$dU = T\,dS - p\,dV.$$

(For convenience only a single work term was considered here, namely DW = p dV, the work done by the system.) The internal energy U and the volume V are extensive quantities, whereas the temperature T and the pressure p are intensive properties, independent of the size of the system. Since dS = (dU + p dV)/T, it follows that the entropy S is an extensive property. In that sense the entropy resembles the volume of the system. We reiterate that volume is a state function with a well-defined mechanical meaning, whereas entropy is introduced by analogy and is not easily visualized. Indeed, as is shown in the next subsection, it requires fairly elaborate reasoning to prove that S is a state function, i.e., that equation (1) holds.

Proof that entropy is a state function

Equation (1) is the condition for the entropy S to be a state function. The standard proof of equation (1), given now, is physical: it uses an engine making Carnot cycles and is based on the Kelvin-Planck formulation of the second law of thermodynamics.

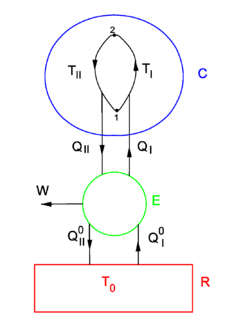

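Consider the figure. A system consisting of an arbitrary closed system C (only heat goes in and out) and a reversible heat engine E is coupled to a large heat reservoir R of constant temperature T0. The system C undergoes a cyclic state change 1-2-1, during which it absorbs small amounts of heat DQ from E. Since no work is performed on or by C, the heat it absorbs equals the change of its internal energy UC, so that over the full cycle

$$\oint_{1\text{-}2\text{-}1} DQ = \oint_{1\text{-}2\text{-}1} dU_\mathrm{C} = 0.$$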

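For the heat engine E, which takes the heat DQ0 from R and delivers the heat DQ to C while C is at temperature T, it holds (by the definition of thermodynamic temperature) that

$$\frac{DQ_0}{T_0} = \frac{DQ}{T}.$$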

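Hence, integrating over the cycle,

$$Q_0 \equiv \oint_{1\text{-}2\text{-}1} DQ_0 = T_0 \oint_{1\text{-}2\text{-}1} \frac{DQ}{T}.$$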

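From the Kelvin-Planck principle it follows that the net work W delivered by E is necessarily less than or equal to zero, because R is the only heat source from which W could be extracted. Invoking the first law of thermodynamics (E and C both return to their initial states, and C absorbs no net heat over the cycle), we get

$$W = \oint_{1\text{-}2\text{-}1} DQ_0 - \oint_{1\text{-}2\text{-}1} DQ = Q_0 \le 0,$$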

so that, since T0 > 0,

$$\oint_{1\text{-}2\text{-}1} \frac{DQ}{T} = \frac{Q_0}{T_0} \le 0.$$

Because the processes inside C and E are assumed reversible, all arrows can be reversed, and in the very same way it is shown that

$$\oint_{1\text{-}2\text{-}1} \frac{DQ}{T} \ge 0,$$

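so that equation (1) holds (with a slight change of notation: the path labels are transferred to the respective integral signs). When C goes from 1 to 2 along path I and returns from 2 to 1 along path II,

$$\oint_{1\text{-}2\text{-}1} \frac{DQ}{T} = \int_{\mathrm{I},\,1}^{2} \frac{DQ}{T} - \int_{\mathrm{II},\,1}^{2} \frac{DQ}{T} = 0.$$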
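The proof leans on the relation DQ0/T0 = DQ/T for a reversible engine. The sketch below illustrates it under assumptions the text does not make: a monatomic ideal gas as working substance (γ = 5/3), with the ideal-gas temperature identified with the thermodynamic temperature. It evaluates the heats of a reversible Carnot cycle and checks that Q_hot/T_hot = Q_cold/T_cold. The function name and its parameters are hypothetical.

```python
import math

R = 8.314462618    # molar gas constant, J/(mol K)
GAMMA = 5.0 / 3.0  # heat-capacity ratio of a monatomic ideal gas (assumption)


def carnot_cycle(T_hot, T_cold, V_a, V_b, n=1.0):
    """Heats and net work of a reversible ideal-gas Carnot cycle a -> b -> c -> d -> a.

    a -> b: isothermal expansion at T_hot, heat Q_hot absorbed from the hot reservoir
    b -> c: adiabatic expansion down to T_cold (T * V**(GAMMA - 1) stays constant)
    c -> d: isothermal compression at T_cold, heat Q_cold released to the cold reservoir
    d -> a: adiabatic compression back to T_hot
    """
    stretch = (T_hot / T_cold) ** (1.0 / (GAMMA - 1.0))
    V_c, V_d = V_b * stretch, V_a * stretch
    Q_hot = n * R * T_hot * math.log(V_b / V_a)    # isothermal: heat = work = nRT ln(V_end/V_start)
    Q_cold = n * R * T_cold * math.log(V_c / V_d)  # heat given off during c -> d
    W = Q_hot - Q_cold                             # the two adiabatic work terms cancel
    return Q_hot, Q_cold, W


Q_hot, Q_cold, W = carnot_cycle(T_hot=500.0, T_cold=300.0, V_a=0.010, V_b=0.025)
print(f"Q_hot/T_hot = {Q_hot / 500.0:.6f} J/K, Q_cold/T_cold = {Q_cold / 300.0:.6f} J/K")
print(f"efficiency W/Q_hot = {W / Q_hot:.6f}, 1 - T_cold/T_hot = {1 - 300.0 / 500.0:.6f}")
```

Because the two adiabats stretch both volumes by the same factor, V_c/V_d equals V_b/V_a. The ratios Q/T therefore coincide, and the efficiency reduces to 1 − T_cold/T_hot.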

Quantum statistical entropy

Density operator

In his book,[6] John von Neumann introduced into quantum mechanics the density operator for a system whose state is only partially known. He considered the situation in which only certain real numbers pm are known, corresponding to a complete set of orthonormal states |m⟩, with m = 0, 1, 2, …, ∞. The quantity pm is the probability that state |m⟩ is occupied; in other words, it is the fraction of systems in a (very large) ensemble of identical systems that are in the state |m⟩. As is usual for probabilities, they are normalized to unity,

$$\sum_{m} p_m = 1.$$

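The averaged value of a property with quantum mechanical operator P̂, for a system described by the probabilities pm, is given by the ensemble average

$$\langle\langle \hat{P} \rangle\rangle = \sum_m p_m \langle m | \hat{P} | m \rangle,$$

where ⟨m|P̂|m⟩ is the usual quantum mechanical expectation value of P̂ in the state |m⟩.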

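The expression for ⟨⟨P̂⟩⟩ can be written as a trace of an operator product. First define the density operator

$$\hat{\rho} \equiv \sum_m |m\rangle\, p_m\, \langle m|;$$

then it follows that

$$\langle\langle \hat{P} \rangle\rangle = \mathrm{Tr}\big[\hat{P}\hat{\rho}\big].$$

Indeed,

$$\mathrm{Tr}\big[\hat{P}\hat{\rho}\big] \equiv \sum_m \langle m|\hat{P}\hat{\rho}|m\rangle = \sum_{nm} \langle m|\hat{P}|n\rangle\, p_n\, \langle n|m\rangle = \sum_{nm} p_n\, \delta_{nm}\, \langle m|\hat{P}|n\rangle = \sum_m p_m \langle m|\hat{P}|m\rangle = \langle\langle \hat{P} \rangle\rangle,$$

where ⟨m|n⟩ = δmn, the Kronecker delta.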

A density operator has unit trace:

$$\mathrm{Tr}\,\hat{\rho} = \sum_m \langle m|\hat{\rho}|m\rangle = \sum_m p_m = 1.$$
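The trace identity for the ensemble average can be checked numerically. The pure-Python sketch below avoids external libraries and is restricted to real vectors. It builds ρ̂ = Σm pm |m⟩⟨m| in a rotated two-dimensional orthonormal basis and compares Tr[P̂ρ̂] with Σm pm⟨m|P̂|m⟩. The basis angle, the probabilities 0.7 and 0.3, and the observable P are arbitrary, hypothetical choices.

```python
import math


def outer(u, v):
    """Matrix of |u><v| for real column vectors u and v."""
    return [[ui * vj for vj in v] for ui in u]


def matmul(A, B):
    """Product of two square matrices stored as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)] for i in range(n)]


def trace(A):
    """Sum of the diagonal elements."""
    return sum(A[i][i] for i in range(len(A)))


def expectation(P, m):
    """<m|P|m> for a real, normalized state vector m."""
    n = len(m)
    return sum(m[i] * P[i][j] * m[j] for i in range(n) for j in range(n))


def density_operator(probs, states):
    """rho = sum_m p_m |m><m| for orthonormal real states."""
    n = len(states[0])
    rho = [[0.0] * n for _ in range(n)]
    for p, m in zip(probs, states):
        proj = outer(m, m)
        for i in range(n):
            for j in range(n):
                rho[i][j] += p * proj[i][j]
    return rho


theta = 0.4                                     # rotation angle of the basis (arbitrary)
states = [[math.cos(theta), math.sin(theta)],   # |0>
          [-math.sin(theta), math.cos(theta)]]  # |1>, orthogonal to |0>
probs = [0.7, 0.3]                              # p_0 + p_1 = 1
P = [[1.0, 2.0], [2.0, -1.0]]                   # hypothetical real symmetric (Hermitian) observable

rho = density_operator(probs, states)
print("Tr[P rho]         =", trace(matmul(P, rho)))
print("sum_m p_m <m|P|m> =", sum(p * expectation(P, m) for p, m in zip(probs, states)))
print("Tr[rho]           =", trace(rho))
```

Because P is not diagonal in the rotated basis, the agreement of the first two printed numbers is a genuine check of the trace formula and not a triviality.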

Closed isothermal system

For a thermodynamic system of constant temperature (T), volume (V), and number of particles (N), von Neumann considered eigenstates of the energy operator Ĥ, the Hamiltonian of the total system,

$$\hat{H}|m\rangle = E_m|m\rangle.$$

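He assumed that pm is proportional to the Boltzmann factor, with the proportionality constant K determined by normalization,

$$p_m = K e^{-E_m/(k_\mathrm{B}T)} \quad\text{with}\quad K\sum_m e^{-E_m/(k_\mathrm{B}T)} = 1 \;\Longrightarrow\; K = \left[\sum_m e^{-E_m/(k_\mathrm{B}T)}\right]^{-1},$$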

where kB is the Boltzmann constant. It is common to designate the partition function of the system of constant T, N, and V by Q,

$$Q \equiv \sum_m e^{-E_m/(k_\mathrm{B}T)} = \frac{1}{K}.$$

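Hence, using that

$$e^{-\hat{H}/(k_\mathrm{B}T)}|m\rangle = e^{-E_m/(k_\mathrm{B}T)}|m\rangle \quad\text{and}\quad \langle m|e^{-\hat{H}/(k_\mathrm{B}T)}|n\rangle = 0 \ \text{for}\ m \ne n,$$

it is found that

$$\hat{\rho} = \frac{1}{Q}\sum_m |m\rangle\langle m|e^{-\hat{H}/(k_\mathrm{B}T)}|m\rangle\langle m| = \frac{1}{Q}\sum_{mn} |m\rangle\langle m|e^{-\hat{H}/(k_\mathrm{B}T)}|n\rangle\langle n| = \frac{e^{-\hat{H}/(k_\mathrm{B}T)}}{Q},$$

where it is used that the set of states is complete, which gives rise to the following resolution of the identity operator:

$$\sum_m |m\rangle\langle m| = \hat{1}.$$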

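In summary, the canonical ensemble[10] average of a property with quantum mechanical operator P̂ is given by

$$\langle\langle \hat{P} \rangle\rangle = \mathrm{Tr}\big[\hat{P}\hat{\rho}\big] = \frac{1}{Q}\mathrm{Tr}\big[\hat{P}e^{-\hat{H}/(k_\mathrm{B}T)}\big].$$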
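In the energy eigenbasis the canonical average reduces to a Boltzmann-weighted sum of diagonal matrix elements. The off-diagonal elements of P̂ drop out because e^(−Ĥ/kBT) is diagonal there. The sketch below assumes a hypothetical four-level spectrum (energies in units of some energy ε, with kBT in the same units) and an arbitrary observable written as a matrix in that eigenbasis. The function names are illustrative.

```python
import math


def boltzmann_probabilities(energies, kT):
    """p_m = exp(-E_m/kT) / Q for the energy eigenvalues E_m.

    Energies are shifted by their minimum before exponentiating; the shift
    cancels in the ratio and only guards against overflow/underflow.
    """
    E0 = min(energies)
    weights = [math.exp(-(E - E0) / kT) for E in energies]
    Q_shifted = sum(weights)
    return [w / Q_shifted for w in weights]


def canonical_average(P, energies, kT):
    """<<P>> = Tr[P exp(-H/kT)] / Q, evaluated as sum_m p_m P_mm in the eigenbasis of H."""
    p = boltzmann_probabilities(energies, kT)
    return sum(p[m] * P[m][m] for m in range(len(energies)))


energies = [0.0, 1.0, 1.0, 2.5]  # hypothetical spectrum with a doubly degenerate level
H = [[E if i == j else 0.0 for j in range(4)] for i, E in enumerate(energies)]
P = [[0.5, 0.2, 0.0, 0.0],
     [0.2, 1.0, 0.3, 0.0],
     [0.0, 0.3, -1.0, 0.1],
     [0.0, 0.0, 0.1, 2.0]]       # arbitrary real symmetric observable

for kT in (0.1, 1.0, 10.0):
    print(f"kT = {kT:5.1f}: U = <<H>> = {canonical_average(H, energies, kT):.4f}, "
          f"<<P>> = {canonical_average(P, energies, kT):.4f}")
```

At low temperature the averages approach the ground-state values. At high temperature all four levels become equally weighted.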

Internal energy

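The quantum statistical expression for the internal energy is

$$U \equiv \langle\langle \hat{H} \rangle\rangle = \mathrm{Tr}\big[\hat{H}\hat{\rho}\big] = \frac{1}{Q}\mathrm{Tr}\big[\hat{H}e^{-\hat{H}/(k_\mathrm{B}T)}\big].$$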

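From

$$\ln\hat{\rho} = \ln\big[e^{-\hat{H}/(k_\mathrm{B}T)}/Q\big] = -\hat{H}/(k_\mathrm{B}T) - \hat{1}\ln Q$$

follows

$$\hat{H} = -k_\mathrm{B}T\big(\ln\hat{\rho} + \hat{1}\ln Q\big).$$

The quantum statistical expression for the internal energy U becomes

$$U = \mathrm{Tr}\Big[-k_\mathrm{B}T\big(\ln\hat{\rho} + \hat{1}\ln Q\big)\hat{\rho}\Big] = -k_\mathrm{B}T\,\mathrm{Tr}\big[\hat{\rho}\ln\hat{\rho}\big] - k_\mathrm{B}T\ln Q,$$

where it is used that a scalar may be taken out of the trace and that the density operator has unit trace.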

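In classical thermodynamics the internal energy is related to the entropy S and the Helmholtz free energy A by

$$U = TS + A.$$

Define

$$\hat{S} \equiv -k_\mathrm{B}\ln\hat{\rho} \quad\text{and}\quad \hat{A} \equiv -k_\mathrm{B}T\ln Q\;\hat{1},$$

and accordingly

$$S \equiv \langle\langle \hat{S} \rangle\rangle = \mathrm{Tr}\big[\hat{S}\hat{\rho}\big] = -k_\mathrm{B}\,\mathrm{Tr}\big[\hat{\rho}\ln\hat{\rho}\big]$$

and

$$A \equiv \langle\langle \hat{A} \rangle\rangle = \mathrm{Tr}\big[\hat{A}\hat{\rho}\big] = -k_\mathrm{B}T\ln Q\;\mathrm{Tr}\big[\hat{\rho}\big] = -k_\mathrm{B}T\ln Q.$$

In summary,

$$U = TS + A = -k_\mathrm{B}T\,\mathrm{Tr}\big[\hat{\rho}\ln\hat{\rho}\big] - k_\mathrm{B}T\ln Q,$$

which agrees with the quantum statistical expression for U. This in turn means that the above definitions of the entropy operator and the Helmholtz free energy operator are consistent.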
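The identity U = TS + A can also be checked numerically. The sketch below works in units where kB = 1, a choice made only to keep the numbers simple, and uses a hypothetical list of energy eigenvalues. From the Boltzmann probabilities it evaluates U = Tr[Ĥρ̂], S = −kB Tr[ρ̂ ln ρ̂] and A = −kBT ln Q, and compares TS + A with U at several temperatures.

```python
import math

K_B = 1.0  # Boltzmann constant set to 1: energies and k_B*T share the same unit


def canonical_thermodynamics(energies, T):
    """Return (U, S, A) for a canonical ensemble with the given energy eigenvalues."""
    weights = [math.exp(-E / (K_B * T)) for E in energies]
    Q = sum(weights)                                         # partition function
    p = [w / Q for w in weights]                             # eigenvalues of rho
    U = sum(pm * E for pm, E in zip(p, energies))            # Tr[H rho]
    S = -K_B * sum(pm * math.log(pm) for pm in p if pm > 0)  # -k_B Tr[rho ln rho]
    A = -K_B * T * math.log(Q)                               # -k_B T ln Q
    return U, S, A


energies = [0.0, 0.7, 0.7, 1.5, 3.0]  # hypothetical spectrum, arbitrary units
for T in (0.2, 1.0, 5.0):
    U, S, A = canonical_thermodynamics(energies, T)
    print(f"T = {T}: U = {U:.6f}, TS + A = {T * S + A:.6f}, S = {S:.6f}")
```

The agreement is exact up to rounding, because ln pm = −Em/(kBT) − ln Q term by term. At high temperature S approaches kB ln 5, the logarithm of the number of levels.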

Note that neither the entropy nor the free energy is given by an ordinary quantum mechanical operator; both depend on the temperature through the partition function Q. Furthermore, Q is defined as a trace,

$$Q = \mathrm{Tr}\big[e^{-\hat{H}/(k_\mathrm{B}T)}\big],$$

and thus samples the whole (Hilbert) space containing the state vectors |m⟩. Almost all quantum mechanical operators that represent observable (physical) quantities have a classical (electromagnetic or mechanical) counterpart. Clearly the entropy operator lacks such a parallel definition, and this is probably the main reason why entropy is a concept that is difficult to comprehend.

Boltzmann's formula for entropy

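Let us consider an isolated system (constant U, V, and N). Traces are taken only over states with energy U. Let there be Ω(U, V, N) of these states. This is in general an extremely large number; for instance, for one mole of a monatomic ideal gas consisting of N = NA ≈ 6 × 10^23 atoms (Avogadro's number) it holds that[11]

$$\Omega(U,V,N) = \left[\left(\frac{4\pi m U}{3h^2}\right)^{3/2}\frac{V e^{5/2}}{N^{5/2}}\right]^N.$$

Here m is the mass of an atom, U is the total energy of the system, h is Planck's constant, V is the volume of the vessel containing the gas, and e ≈ 2.718 is the base of the natural logarithm. For helium at 0 °C and 1 atm the factor in square brackets is about e^15, so that

$$\Omega \approx e^{15N} \approx 10^{4\times 10^{24}}.$$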
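Ω itself overflows any floating-point type, so its size is best examined through ln Ω. The sketch below evaluates ln Ω/N from the formula for Ω for one mole of helium at 0 °C and 1 atm, taking U = (3/2)NkBT and V = NkBT/p for an ideal gas. The choice of gas and conditions is an illustrative assumption. The sketch also checks that doubling U, V and N leaves ln Ω/N unchanged, so that S = kB ln Ω is extensive.

```python
import math

H = 6.62607015e-34       # Planck constant, J s
K_B = 1.380649e-23       # Boltzmann constant, J/K
N_A = 6.02214076e23      # Avogadro constant, 1/mol
AMU = 1.66053906660e-27  # atomic mass constant, kg


def ln_omega_per_atom(m, U, V, N):
    """(1/N) ln Omega for Omega = [(4 pi m U / 3h^2)^(3/2) V e^(5/2) / N^(5/2)]^N.

    Working with logarithms avoids forming Omega, which is far beyond float range.
    """
    return (1.5 * math.log(4.0 * math.pi * m * U / (3.0 * H**2))
            + math.log(V) + 2.5 - 2.5 * math.log(N))


m_he = 4.002602 * AMU  # mass of a helium atom (assumed example gas)
T, p = 273.15, 101325.0  # 0 degrees Celsius, 1 atm
N = N_A                  # one mole of atoms
U = 1.5 * N * K_B * T    # internal energy of a monatomic ideal gas
V = N * K_B * T / p      # ideal-gas volume, about 22.4 L

x = ln_omega_per_atom(m_he, U, V, N)
print(f"ln(Omega)/N        = {x:.3f}")
print(f"S = k_B ln(Omega)  = {x * N * K_B:.1f} J/K")
print(f"log10(log10 Omega) = {math.log10(x * N / math.log(10)):.2f}")
print("extensive:", math.isclose(ln_omega_per_atom(m_he, 2 * U, 2 * V, 2 * N), x))
```

The value ln Ω/N ≈ 15 corresponds to S ≈ 124 J/K for the mole of helium and to log10 log10 Ω ≈ 24.6.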

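The sum in the partition function shrinks to a sum over the Ω states of energy U, hence

$$Q = \mathrm{Tr}\big[e^{-\hat{H}/(k_\mathrm{B}T)}\big] = \Omega(U,V,N)\,e^{-U/(k_\mathrm{B}T)}.$$

Likewise,

$$S = -k_\mathrm{B}\,\mathrm{Tr}\big[\hat{\rho}\ln\hat{\rho}\big] = -k_\mathrm{B}\,\Omega\,\frac{e^{-U/(k_\mathrm{B}T)}}{Q}\ln\left(\frac{e^{-U/(k_\mathrm{B}T)}}{Q}\right) = -k_\mathrm{B}\ln\frac{1}{\Omega},$$

so that Boltzmann's celebrated equation follows[12]

$$S = k_\mathrm{B}\ln\Omega(U,V,N).$$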
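The step from the trace formula to S = kB ln Ω uses only the fact that all Ω eigenvalues of ρ̂ equal e^(−U/kBT)/Q = 1/Ω. The short sketch below repeats that arithmetic in units where kB = 1, with arbitrary values of Ω and U/(kBT). It shows that the result depends on neither U nor T. The function name is hypothetical.

```python
import math


def entropy_from_trace(omega, U_over_kT, k_B=1.0):
    """-k_B Tr[rho ln rho] when all omega accessible states have the same energy U."""
    boltzmann = math.exp(-U_over_kT)  # e^{-U/(k_B T)}
    Q = omega * boltzmann             # Q = Omega e^{-U/(k_B T)}
    p = boltzmann / Q                 # every nonzero eigenvalue of rho, i.e. 1/Omega
    return -k_B * omega * p * math.log(p)


for omega in (2, 1000, 10**6):
    for U_over_kT in (0.5, 5.0, 50.0):
        S = entropy_from_trace(omega, U_over_kT)
        print(f"Omega = {omega:>7}, U/kT = {U_over_kT:>4}: S/k_B = {S:.6f}, ln Omega = {math.log(omega):.6f}")
```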

Boltzmann's equation is derived as an average over an ensemble of identical systems of constant energy, number of particles, and volume; such an ensemble is known as a microcanonical ensemble. However, it can be shown that in a canonical ensemble (constant T) the energy fluctuations around the mean energy are extremely small, with relative fluctuations of the order of 1/√N. Taking the trace over only the states of mean energy is therefore a very good approximation. In other words, although Boltzmann's formula does not hold formally for a canonical ensemble, in practice it is a very good approximation for isothermal systems as well.
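The smallness of canonical energy fluctuations can be illustrated with a toy model that is not taken from the text: N independent two-level units with level spacing ε. The sketch computes σE/⟨E⟩ exactly from the canonical distribution over the levels nε, each with degeneracy C(N, n). It compares the result with the closed form √((1 − p)/(Np)), where p is the excitation probability of a single unit, and then evaluates the closed form for a mole of units. The model and function names are illustrative assumptions. For a monatomic ideal gas the corresponding ratio is √(2/(3N)), which shows the same 1/√N decrease.

```python
import math


def relative_fluctuation_exact(N, x):
    """sigma_E / <E> for N independent two-level units, by direct canonical summation.

    Level n (n units excited) has energy n*eps and degeneracy C(N, n); x = eps/(k_B T).
    Energies are measured in units of eps.
    """
    weights = [math.comb(N, n) * math.exp(-n * x) for n in range(N + 1)]
    Q = sum(weights)
    mean = sum(n * w for n, w in enumerate(weights)) / Q
    mean_sq = sum(n * n * w for n, w in enumerate(weights)) / Q
    return math.sqrt(mean_sq - mean**2) / mean


def relative_fluctuation_formula(N, x):
    """Closed form sqrt((1 - p) / (N p)), with p the excitation probability of one unit."""
    p = math.exp(-x) / (1.0 + math.exp(-x))
    return math.sqrt((1.0 - p) / (N * p))


x = 1.0  # eps = k_B T (arbitrary choice)
for N in (10, 100, 1000):
    print(N, relative_fluctuation_exact(N, x), relative_fluctuation_formula(N, x))
print("one mole:", relative_fluctuation_formula(6.02214076e23, x))
```

For a mole of units the relative fluctuation is of the order of 10^-12. This is why restricting the trace to states of the mean energy changes practically nothing.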

Footnotes

- ↑ A size-extensive property of a system becomes x times larger when the system is enlarged by a factor x, provided all intensive parameters remain the same upon the enlargement. Intensive parameters, like temperature, density, and pressure, are independent of size.

- ↑ It is reported that in a conversation with Claude Shannon, John (Johann) von Neumann said: "In the second place, and more important, nobody knows what entropy really is [...]". M. Tribus, E. C. McIrvine, Energy and information, Scientific American, vol. 224 (September 1971), pp. 178–184.

- ↑ R. J. E. Clausius, Über verschiedene für die Anwendung bequeme Formen der Hauptgleichungen der mechanischen Wärmetheorie [On several forms of the fundamental equations of the mechanical theory of heat that are useful for application], Annalen der Physik (then Poggendorff's Annalen der Physik und Chemie), vol. 125, pp. 352–400 (1865). Around the same time Clausius wrote a two-volume treatise: R. J. E. Clausius, Abhandlungen über die mechanische Wärmetheorie [Treatise on the mechanical theory of heat], F. Vieweg, Braunschweig (vol. I: 1864, vol. II: 1867), available on Google Books (both volumes). The 1865 Annalen paper was reprinted in the second volume of the Abhandlungen and included in the 1867 English translation.

- ↑ S. Carnot, Réflexions sur la puissance motrice du feu et sur les machines propres à développer cette puissance (Reflections on the motive power of fire and on machines suited to develop that power), Chez Bachelier, Paris (1824).

- ↑ L. Boltzmann, Über die Beziehung zwischen dem zweiten Hauptsatz der mechanischen Wärmetheorie und der Wahrscheinlichkeitsrechnung respektive den Sätzen über das Wärmegleichgewicht, [On the relation between the second fundamental law of the mechanical theory of heat and the probability calculus with respect to the theorems of heat equilibrium] Wiener Berichte vol. 76, pp. 373-435 (1877)

- ↑ J. von Neumann, Mathematische Grundlagen der Quantenmechanik [Mathematical foundations of quantum mechanics], Springer, Berlin (1932)

- ↑ C. Carathéodory, Untersuchungen über die Grundlagen der Thermodynamik [Investigation on the foundations of thermodynamics], Mathematische Annalen, vol. 67, pp. 355-386 (1909).

- ↑ M. Born, Physikalische Zeitschrift, vol. 22, p. 218, 249, 282 (1922)

- ↑ H. B. Callen, Thermodynamics and an Introduction to Thermostatistics. John Wiley and Sons, New York, 2nd edition, (1965); E. A. Guggenheim, Thermodynamics, North-Holland, Amsterdam, 5th edition (1967)

- ↑ A large number of systems with constant T, V, and N is known as a canonical ensemble; the term is due to Willard Gibbs.

- ↑ T. L. Hill, An introduction to statistical thermodynamics, Addison-Wesley, Reading, Mass. (1960) p. 82

- ↑ This equation, in the form S = k log W, is engraved on Boltzmann's tombstone.

References

- M. W. Zemansky, Kelvin and Carathéodory—A Reconciliation, American Journal of Physics Vol. 34, pp. 914-920 (1966)
